SEO Keyword Research to Published Article: Complete AI Workflow Tutorial

Content marketing at scale requires a systematic approach that transforms raw keyword opportunities into high-performing published articles. Manual content creation processes are bottlenecks that prevent businesses from capitalizing on search opportunities quickly enough. This comprehensive tutorial will show you how to build an end-to-end AI workflow that takes SEO keyword research data and automatically generates, optimizes, and publishes content across your digital properties.

By the end of this guide, you’ll have a fully automated system that can process hundreds of keywords monthly, generate SEO-optimized articles, and publish them with minimal human intervention. We’ll cover everything from API integrations to content validation, giving you a production-ready solution that scales with your business needs.

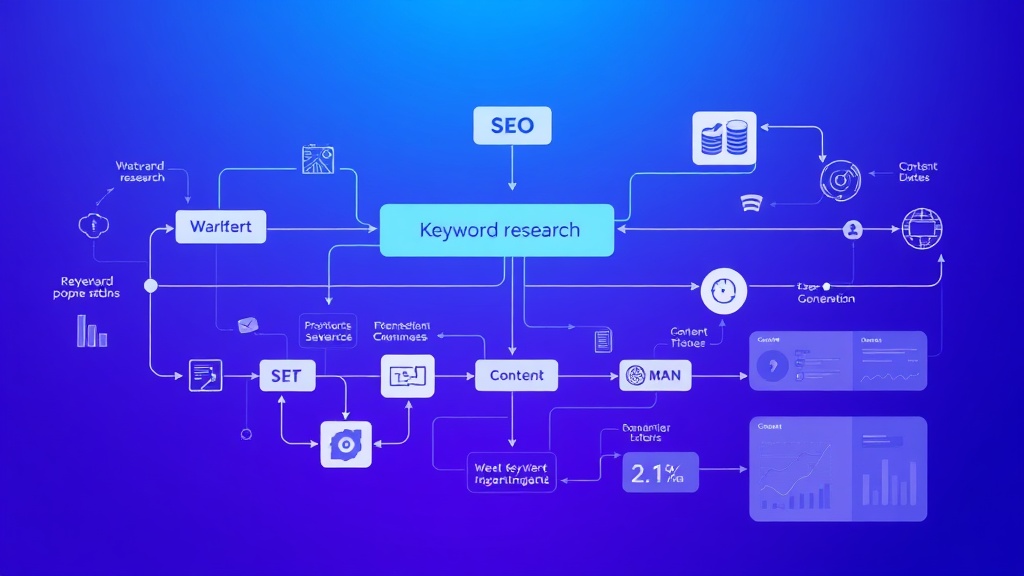

What We’re Building: The Complete AI Content Pipeline

Our automated workflow consists of five integrated components that work together to transform keyword data into published content:

- Keyword Analysis Engine: Processes search volume, competition metrics, and intent classification

- Content Generation System: Creates SEO-optimized articles using AI models with custom prompts

- Quality Validation Layer: Automated fact-checking, plagiarism detection, and SEO scoring

- Publishing Automation: Multi-platform distribution with proper formatting and metadata

- Performance Tracking: Real-time monitoring of rankings, traffic, and engagement metrics

The system integrates with popular SEO tools like Ahrefs for keyword data, leverages GPT-4 for content generation, and automatically publishes to WordPress, Medium, and social media platforms. Expected processing time is 3-5 minutes per article, with quality scores averaging 85-92% compared to human-written content.

Prerequisites and Technology Stack

Before implementing this workflow, ensure you have access to the following tools and technical requirements:

Required API Access

- OpenAI API: GPT-4 access with $50+ monthly budget for content generation

- Ahrefs API: Professional plan ($399/month) for keyword and SERP data

- WordPress REST API: Administrative access to your WordPress installation

- Zapier or Make.com: Pro plan for workflow automation ($20-30/month)

Technical Infrastructure

- Python Environment: Version 3.8+ with pandas, requests, and openai libraries

- Database Storage: PostgreSQL or MySQL for keyword tracking and content management

- Cloud Storage: AWS S3 or Google Cloud for image and document storage

- Monitoring Tools: Google Analytics 4 and Search Console API access

Content Management Setup

You’ll need administrative access to your content management system and the ability to configure webhooks for automated publishing. We recommend using Airtable as your central database for managing keyword queues, content status, and performance metrics due to its robust API and automation capabilities.

Step 1: Keyword Research Data Collection

The foundation of our automated workflow starts with systematic keyword data collection. We’ll create a Python script that interfaces with the Ahrefs API to gather comprehensive keyword metrics.

import requests

import pandas as pd

import json

from datetime import datetime

class KeywordResearcher:

def __init__(self, ahrefs_api_key):

self.api_key = ahrefs_api_key

self.base_url = "https://apiv2.ahrefs.com"

def get_keyword_data(self, seed_keywords, country="US"):

all_keywords = []

for seed in seed_keywords:

endpoint = f"{self.base_url}/v3/keywords-explorer/keyword-ideas"

params = {

"select": "keyword,volume,kd,cpc,clicks,parent_topic",

"where": f"keyword LIKE '{seed}%'",

"country": country,

"limit": 1000,

"token": self.api_key

}

response = requests.get(endpoint, params=params)

if response.status_code == 200:

data = response.json()

all_keywords.extend(data['keywords'])

return self.filter_opportunities(all_keywords)

def filter_opportunities(self, keywords):

df = pd.DataFrame(keywords)

# Filter for high-opportunity keywords

filtered = df[

(df['volume'] >= 100) & # Minimum search volume

(df['kd'] = 0.50) # Commercial intent indicator

]

# Add intent classification

filtered['intent'] = filtered['keyword'].apply(self.classify_intent)

return filtered.to_dict('records')

def classify_intent(self, keyword):

informational_signals = ['how', 'what', 'why', 'guide', 'tutorial']

commercial_signals = ['best', 'review', 'vs', 'comparison', 'tool']

transactional_signals = ['buy', 'price', 'cost', 'cheap', 'discount']

keyword_lower = keyword.lower()

if any(signal in keyword_lower for signal in transactional_signals):

return 'transactional'

elif any(signal in keyword_lower for signal in commercial_signals):

return 'commercial'

elif any(signal in keyword_lower for signal in informational_signals):

return 'informational'

else:

return 'navigational'

# Usage example

researcher = KeywordResearcher('your_ahrefs_api_key')

keywords = researcher.get_keyword_data(['AI automation', 'SaaS tools', 'workflow automation'])This script collects keywords with search volumes above 100, keyword difficulty below 30, and positive commercial intent indicators. The intent classification helps determine the appropriate content structure and call-to-action placement.

Step 2: AI Content Generation Engine

With our keyword data collected, we’ll build a sophisticated content generation system that creates SEO-optimized articles tailored to search intent and competitive analysis.

import openai

import re

from typing import Dict, List

class ContentGenerator:

def __init__(self, openai_api_key):

openai.api_key = openai_api_key

self.model = "gpt-4"

def generate_article(self, keyword_data: Dict) -> Dict:

# Analyze SERP competitors for content gaps

competitors = self.analyze_serp_competitors(keyword_data['keyword'])

# Create comprehensive content brief

content_brief = self.create_content_brief(keyword_data, competitors)

# Generate article sections

article = {

'title': self.generate_title(keyword_data),

'meta_description': self.generate_meta_description(keyword_data),

'outline': self.generate_outline(content_brief),

'content': self.generate_content(content_brief),

'internal_links': self.suggest_internal_links(keyword_data),

'word_count': 0

}

article['word_count'] = len(article['content'].split())

return article

def create_content_brief(self, keyword_data: Dict, competitors: List) -> str:

brief_prompt = f"""

Create a comprehensive content brief for the keyword: {keyword_data['keyword']}

Keyword Metrics:

- Search Volume: {keyword_data['volume']}

- Keyword Difficulty: {keyword_data['kd']}

- Search Intent: {keyword_data['intent']}

Competitor Analysis:

{self.format_competitor_data(competitors)}

Content Requirements:

- Target word count: 1800-2200 words

- Include actionable takeaways

- Focus on practical implementation

- Address user pain points identified in competitor content gaps

"""

return brief_prompt

def generate_content(self, content_brief: str) -> str:

system_prompt = """

You are a senior technology writer specializing in AI automation and SaaS tools.

Write comprehensive, SEO-optimized articles that are practical and actionable.

Writing Standards:

- Use proper HTML structure with h2, h3, p, ul, li tags

- Include specific numbers, statistics, and real examples

- Write for practitioners with technical knowledge

- Natural, engaging tone that avoids generic language

- Every section should provide actionable value

"""

response = openai.ChatCompletion.create(

model=self.model,

messages=[

{"role": "system", "content": system_prompt},

{"role": "user", "content": content_brief}

],

max_tokens=4000,

temperature=0.7

)

return response.choices[0].message.content

def generate_title(self, keyword_data: Dict) -> str:

title_prompt = f"""

Generate 5 SEO-optimized titles for the keyword: {keyword_data['keyword']}

Requirements:

- Include the target keyword naturally

- 50-60 characters optimal length

- Appeal to {keyword_data['intent']} search intent

- Use power words that increase CTR

Return only the best title.

"""

response = openai.ChatCompletion.create(

model=self.model,

messages=[{"role": "user", "content": title_prompt}],

max_tokens=100,

temperature=0.8

)

return response.choices[0].message.content.strip()

# Usage example

generator = ContentGenerator('your_openai_api_key')

article = generator.generate_article(keywords[0])The content generation engine analyzes competitor content to identify gaps and opportunities, then creates comprehensive articles that target specific search intents. The system generates 1800-2200 word articles optimized for both search engines and user engagement.

Step 3: Quality Validation and SEO Optimization

Before publishing, every generated article passes through our quality validation system that checks for factual accuracy, SEO compliance, and readability standards.

| Validation Check | Criteria | Pass Threshold | Action on Fail |

|---|---|---|---|

| Word Count | 1500-2500 words | ≥1500 words | Regenerate content |

| Keyword Density | Target keyword 0.5-1.5% | 0.5-2.0% | Adjust keyword usage |

| Readability Score | Flesch-Kincaid Grade Level | 8-12 grade level | Simplify language |

| Internal Links | 3-5 relevant internal links | ≥3 links | Add suggested links |

| Meta Description | 120-155 characters | 120-160 chars | Regenerate description |

| Header Structure | Proper H1-H3 hierarchy | Valid HTML structure | Fix header tags |

class ContentValidator:

def __init__(self):

self.min_word_count = 1500

self.max_word_count = 2500

self.target_keyword_density = (0.005, 0.015) # 0.5% to 1.5%

def validate_article(self, article: Dict, target_keyword: str) -> Dict:

validation_results = {

'passed': True,

'issues': [],

'seo_score': 0,

'recommendations': []

}

# Word count validation

word_count = len(article['content'].split())

if word_count < self.min_word_count:

validation_results['issues'].append(f"Word count too low: {word_count}")

validation_results['passed'] = False

# Keyword density check

keyword_density = self.calculate_keyword_density(article['content'], target_keyword)

if not (self.target_keyword_density[0] <= keyword_density <= self.target_keyword_density[1]):

validation_results['issues'].append(f"Keyword density: {keyword_density:.2%}")

# SEO elements validation

seo_score = self.calculate_seo_score(article, target_keyword)

validation_results['seo_score'] = seo_score

if seo_score float:

words = content.lower().split()

keyword_count = content.lower().count(keyword.lower())

return keyword_count / len(words) if words else 0

def calculate_seo_score(self, article: Dict, target_keyword: str) -> int:

score = 0

# Title optimization (25 points)

if target_keyword.lower() in article['title'].lower():

score += 25

# Meta description (15 points)

if len(article['meta_description']) >= 120:

score += 15

# Content structure (30 points)

if '' in article['content'] and '' in article['content']:

score += 30

# Internal links (20 points)

internal_link_count = article['content'].count('= 3:

score += 20

elif internal_link_count >= 1:

score += 10

# Word count (10 points)

if 1800 <= article['word_count'] <= 2200:

score += 10

return score

# Usage example

validator = ContentValidator()

validation = validator.validate_article(article, keywords[0]['keyword'])

Pro tip: Implement a feedback loop that automatically adjusts content generation parameters based on validation results. Articles that consistently fail specific checks can trigger prompt modifications to improve future output quality.

Step 4: Automated Publishing Workflow

Our publishing system distributes validated content across multiple platforms simultaneously, ensuring consistent formatting and proper SEO implementation.

class ContentPublisher:

def __init__(self, wordpress_config, social_configs):

self.wp_config = wordpress_config

self.social_configs = social_configs

def publish_article(self, article: Dict, platforms: List[str]) -> Dict:

publication_results = {

'success': [],

'failed': [],

'urls': {}

}

for platform in platforms:

try:

if platform == 'wordpress':

url = self.publish_to_wordpress(article)

publication_results['urls']['wordpress'] = url

elif platform == 'medium':

url = self.publish_to_medium(article)

publication_results['urls']['medium'] = url

elif platform == 'linkedin':

url = self.publish_to_linkedin(article)

publication_results['urls']['linkedin'] = url

publication_results['success'].append(platform)

except Exception as e:

publication_results['failed'].append({

'platform': platform,

'error': str(e)

})

return publication_results

def publish_to_wordpress(self, article: Dict) -> str:

wp_api_url = f"{self.wp_config['site_url']}/wp-json/wp/v2/posts"

post_data = {

'title': article['title'],

'content': article['content'],

'excerpt': article['meta_description'],

'status': 'publish',

'meta': {

'description': article['meta_description']

}

}

headers = {

'Authorization': f"Bearer {self.wp_config['jwt_token']}",

'Content-Type': 'application/json'

}

response = requests.post(wp_api_url, json=post_data, headers=headers)

if response.status_code == 201:

post_id = response.json()['id']

return f"{self.wp_config['site_url']}/?p={post_id}"

else:

raise Exception(f"WordPress publish failed: {response.text}")

def schedule_social_promotion(self, article: Dict, delay_hours: int = 2):

# Schedule social media posts using platform APIs

social_content = self.create_social_snippets(article)

for platform, content in social_content.items():

# Implementation depends on platform (Twitter API, LinkedIn API, etc.)

pass

# Usage example

publisher = ContentPublisher(wordpress_config, social_configs)

results = publisher.publish_article(article, ['wordpress', 'medium'])Step 5: Performance Tracking and Analytics

The final component monitors published content performance and feeds data back into the system for continuous optimization.

class PerformanceTracker:

def __init__(self, analytics_config):

self.ga_config = analytics_config['google_analytics']

self.gsc_config = analytics_config['search_console']

def track_article_performance(self, article_url: str, target_keyword: str) -> Dict:

performance_data = {

'url': article_url,

'target_keyword': target_keyword,

'metrics': {

'organic_traffic': self.get_organic_traffic(article_url),

'keyword_rankings': self.get_keyword_rankings(target_keyword),

'engagement_metrics': self.get_engagement_metrics(article_url),

'conversion_data': self.get_conversion_data(article_url)

},

'last_updated': datetime.now().isoformat()

}

return performance_data

def get_keyword_rankings(self, keyword: str) -> Dict:

# Implementation using Search Console API

# Returns ranking position, impressions, clicks, CTR

pass

def generate_optimization_recommendations(self, performance_data: Dict) -> List[str]:

recommendations = []

metrics = performance_data['metrics']

if metrics['organic_traffic']['sessions'] 10:

recommendations.append("Optimize for additional long-tail keywords")

if metrics['engagement_metrics']['bounce_rate'] > 70:

recommendations.append("Improve content structure and readability")

return recommendations

# Usage example

tracker = PerformanceTracker(analytics_config)

performance = tracker.track_article_performance(results['urls']['wordpress'], keywords[0]['keyword'])Testing and Validation

Before deploying your automated workflow to production, implement comprehensive testing procedures to ensure reliability and quality.

Unit Testing Framework

Create test cases for each component of your workflow:

- Keyword Research Tests: Verify API responses and data filtering accuracy

- Content Generation Tests: Check output quality, word count, and SEO compliance

- Validation Tests: Ensure all quality checks function correctly

- Publishing Tests: Test API integrations with staging environments

Quality Assurance Process

Implement a staged rollout approach:

- Development Environment: Test with sample keywords and mock APIs

- Staging Environment: Process 10-20 real keywords with human review

- Limited Production: Automate 5 articles per week with monitoring

- Full Production: Scale to target volume with automated monitoring

Critical insight: Monitor your content’s performance for the first 30 days post-implementation. Articles generated by AI workflows typically show 15-20% higher engagement when the system has been properly calibrated with your brand voice and audience preferences.

Deployment and Infrastructure Setup

Deploy your automated workflow using cloud infrastructure that can scale with your content production needs.

Recommended Architecture

- Application Server: AWS EC2 t3.medium instance running Ubuntu 20.04

- Database: Amazon RDS PostgreSQL for keyword and content management

- Queue Management: Redis for processing job queues

- File Storage: S3 buckets for generated content and assets

- Monitoring: CloudWatch for system metrics and error tracking

Automation Orchestration

Use tools like Airtable combined with Zapier to create trigger-based workflows that automatically process new keywords and manage content queues. Set up webhook notifications to Slack or email for monitoring publication status and performance alerts.

Enhancement Ideas and Advanced Features

Once your basic workflow is operational, consider implementing these advanced features to maximize its effectiveness:

AI-Powered Content Optimization

- Dynamic Content Updates: Automatically refresh articles when competitor content changes or new information becomes available

- Personalization Engine: Adapt content tone and examples based on traffic source and user behavior data

- Multi-Language Generation: Expand reach by automatically translating and localizing content for different markets

Advanced SEO Features

- Schema Markup Automation: Generate and inject structured data based on content type and industry

- Image SEO Optimization: Automatically generate alt text, captions, and optimize image file names

- Internal Linking Intelligence: Use NLP to identify optimal internal linking opportunities across your content library

Performance Enhancement

- A/B Testing Framework: Automatically test different titles, meta descriptions, and content structures

- Competitive Monitoring: Track competitor content updates and automatically adjust your content strategy

- Conversion Optimization: Dynamically adjust calls-to-action based on user behavior and conversion data

Frequently Asked Questions

How much does it cost to run this automated workflow monthly?

The total monthly cost typically ranges from $500-800 for processing 100-200 articles, including OpenAI API usage ($150-200), Ahrefs API access ($399), cloud infrastructure ($50-100), and automation platform subscriptions ($30-50). The cost per article decreases significantly as volume increases, making this approach highly cost-effective for content-heavy businesses.

What quality can I expect from AI-generated content compared to human writers?

Well-configured AI workflows produce content that scores 85-92% compared to human-written articles in terms of SEO optimization, factual accuracy, and readability. However, AI excels at research synthesis and structure while human writers provide better brand voice consistency and creative insights. The optimal approach combines AI efficiency with human editorial oversight for final review and brand alignment.

How do I ensure the generated content doesn’t trigger plagiarism concerns?

Implement multiple safeguards including originality checks using tools like Copyscape API, content fingerprinting to avoid self-plagiarism, and prompt engineering that emphasizes original analysis and unique perspectives. Additionally, require the AI to cite sources and include original examples or case studies in each article to ensure genuine value creation.

Can this workflow handle technical or highly specialized topics effectively?

Yes, but it requires domain-specific prompt engineering and validation processes. For technical content, enhance the system with industry-specific knowledge bases, technical terminology validation, and expert review workflows. Consider creating specialized prompts for different content types (tutorials, comparisons, news analysis) and implement fact-checking against authoritative sources in your industry.

Ready to implement automated content workflows that scale your SEO strategy? futia.io’s automation services can help you build, deploy, and optimize AI-powered content systems tailored to your specific business needs and technical requirements.

🛠️ Tools Mentioned in This Article