Complete Guide to Real-Time Data Pipelines with AI Processing

Real-time data pipelines with AI processing have become the backbone of modern digital businesses, enabling organizations to make split-second decisions that drive competitive advantage. From fraud detection systems processing millions of transactions per second to recommendation engines delivering personalized content in milliseconds, these systems power the most critical operations in today’s data-driven economy.

The global real-time analytics market is projected to reach $15.85 billion by 2025, growing at a CAGR of 30.5%. This explosive growth reflects the increasing demand for immediate insights from streaming data sources. Unlike traditional batch processing systems that analyze data hours or days after collection, real-time pipelines process information as it flows, enabling immediate responses to changing conditions.

This guide walks through building production-ready real-time data pipelines with integrated AI processing. It covers everything from architectural decisions to implementation details, with the goal of designing systems that can handle millions of events per second while delivering intelligent insights in real time.

Prerequisites and Foundation Requirements

Before diving into implementation, ensure you have the following technical prerequisites in place:

Technical Skills and Knowledge

- Distributed Systems Understanding: Familiarity with concepts like partitioning, replication, and eventual consistency

- Programming Proficiency: Strong skills in Python, Java, or Scala for pipeline development

- Cloud Platform Experience: Hands-on experience with AWS, GCP, or Azure services

- Machine Learning Fundamentals: Understanding of model training, inference, and deployment patterns

- Data Formats: Knowledge of Avro, Parquet, JSON, and Protocol Buffers

Infrastructure Requirements

- Compute Resources: Minimum 16 vCPUs and 64GB RAM for development environments

- Storage: High-IOPS SSD storage with at least 1TB capacity

- Network: Low-latency network connections (sub-millisecond preferred)

- Monitoring Stack: Prometheus, Grafana, or equivalent monitoring solutions

Software Dependencies

- Apache Kafka 3.0+ for message streaming

- Apache Spark 3.2+ or Apache Flink 1.14+ for stream processing

- Docker and Kubernetes for containerization and orchestration

- Apache Airflow or Prefect for workflow management

- MLflow or Kubeflow for ML model lifecycle management

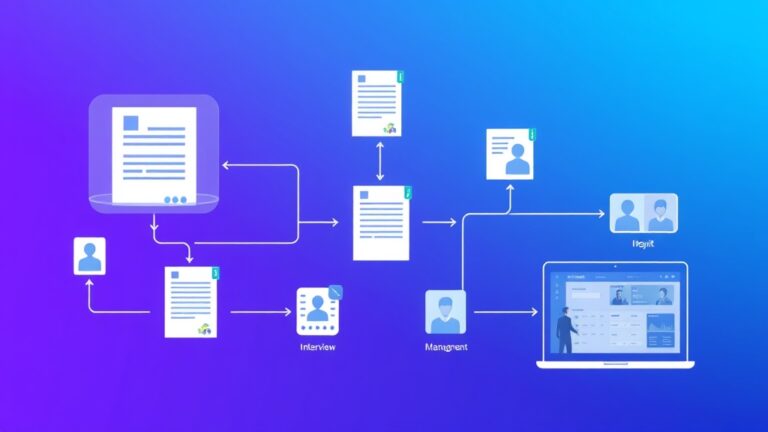

Architecture and Strategy Overview

Successful real-time data pipelines require careful architectural planning that balances performance, scalability, and reliability. Most designs follow either a Lambda or a Kappa architecture, depending on whether a separate batch path is needed alongside the streaming path.

Lambda vs. Kappa Architecture

| Aspect | Lambda Architecture | Kappa Architecture |
|---|---|---|
| Complexity | High (dual processing paths) | Medium (single processing path) |
| Latency | Mixed (batch + real-time) | Consistent low latency |
| Data Consistency | Eventually consistent | Strongly consistent |
| Maintenance Overhead | High | Medium |
| Use Case | Historical analysis + real-time | Pure streaming workloads |

Core Components Architecture

A robust real-time AI pipeline consists of five essential layers:

- Data Ingestion Layer: Handles high-throughput data collection from multiple sources

- Message Streaming Layer: Provides durable, scalable message queuing and routing

- Stream Processing Layer: Executes real-time transformations and AI inference

- Storage Layer: Manages both hot and cold data storage requirements

- Serving Layer: Delivers processed results to downstream applications

Expert Tip: Design your architecture with failure isolation in mind. Each component should be able to fail independently without bringing down the entire pipeline. Implement circuit breakers and graceful degradation patterns from day one.
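To make the circuit-breaker idea concrete, here is a minimal sketch in Python. The class name, the thresholds, and the injectable `clock` parameter are illustrative assumptions and do not come from any particular library. After a configurable number of consecutive failures the breaker stops calling the dependency and returns a degraded result until a cool-down period has passed.

```python
import time


class CircuitBreaker:
    """Opens after `max_failures` consecutive errors, then retries after `reset_after` seconds."""

    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.clock = clock          # injectable so the behavior can be tested
        self.failures = 0
        self.opened_at = None       # None means the circuit is closed

    def call(self, func, fallback):
        # While open, skip the dependency entirely until the cool-down elapses.
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                return fallback()
            # Half-open: allow one trial call; a failure re-opens the circuit
            # immediately because the failure count has not been reset yet.
            self.opened_at = None
        try:
            result = func()
        except Exception:
            self.failures += 1
            if self.failures >= self.max_failures:
                self.opened_at = self.clock()
            return fallback()
        self.failures = 0
        return result
```

Wrapping each downstream call (model server, feature store, database) in its own breaker keeps one failing component from stalling the rest of the pipeline.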

AI Integration Patterns

Integrating AI processing into real-time pipelines requires specific patterns to handle the computational overhead while maintaining low latency:

- Model Serving Pattern: Deploy lightweight models as microservices with auto-scaling capabilities

- Feature Store Pattern: Maintain real-time feature computation and caching for consistent model inputs

- A/B Testing Pattern: Route traffic between multiple model versions for continuous improvement

- Fallback Pattern: Implement rule-based fallbacks when AI models are unavailable
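The A/B testing and fallback patterns can be combined in a few lines. The sketch below is one possible shape, not a prescribed implementation: the function names, the 10% treatment share, and the amount-based fallback rule are all hypothetical. Users are assigned to a model version by hashing their ID, so the same user always sees the same version, and any model failure drops back to a simple rule.

```python
import hashlib


def choose_variant(user_id, treatment_share=0.1):
    """Deterministically assign a user to 'control' or 'treatment' by hashing the ID."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000   # 0..9999
    return "treatment" if bucket < treatment_share * 10_000 else "control"


def rule_based_score(event):
    """Hypothetical fallback rule: flag unusually large amounts."""
    return 1.0 if event["amount"] > 10_000 else 0.0


def score_event(event, models):
    """Score with the assigned model version; fall back to rules if it fails."""
    variant = choose_variant(event["user_id"])
    try:
        return variant, models[variant](event)
    except Exception:
        return "fallback", rule_based_score(event)
```

Returning the variant name alongside the score makes it possible to compare model versions downstream and to count how often the fallback path is taken.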

Detailed Implementation Steps

Step 1: Setting Up the Message Streaming Infrastructure

Apache Kafka serves as the central nervous system for real-time data pipelines. Configure Kafka with appropriate partitioning and replication settings:

    kafka-topics.sh --create --topic user-events \
      --bootstrap-server localhost:9092 \
      --partitions 12 \
      --replication-factor 3 \
      --config retention.ms=86400000 \
      --config segment.ms=3600000

Key configuration considerations:

- Partition Count: Set to 2-3x your expected consumer parallelism

- Replication Factor: Use 3 for production environments

- Retention Policy: Balance storage costs with replay requirements

- Compression: Enable LZ4 or Snappy for network efficiency
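The sizing rules above reduce to simple arithmetic. The helpers below are a rough sketch with hypothetical names; the disk estimate ignores compression, indexes, and segment rollover, so it should be read as an upper bound rather than a capacity plan.

```python
from datetime import timedelta


def recommended_partitions(consumer_parallelism, headroom=(2, 3)):
    """Return the (low, high) partition range for 2-3x consumer parallelism."""
    low, high = headroom
    return consumer_parallelism * low, consumer_parallelism * high


def to_ms(**duration):
    """Convert a duration such as hours=24 to the milliseconds Kafka expects."""
    return int(timedelta(**duration).total_seconds() * 1000)


def retained_bytes(events_per_sec, avg_event_bytes, retention_ms, replication_factor):
    """Rough upper bound on disk used by one topic across all replicas."""
    return int(events_per_sec * avg_event_bytes * (retention_ms / 1000) * replication_factor)
```

For example, `to_ms(hours=24)` gives the `retention.ms=86400000` used in the command above and `to_ms(hours=1)` gives `segment.ms=3600000`. With an assumed load of 10,000 events per second at 500 bytes each, 24-hour retention at replication factor 3 comes to roughly 1.3 TB before compression.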

Step 2: Implementing Stream Processing Logic

Apache Flink provides excellent performance for stateful stream processing with exactly-once semantics. Here’s a sample implementation for real-time user behavior analysis:

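    import java.util.Properties

    import org.apache.flink.streaming.api.scala._
    import org.apache.flink.streaming.api.windowing.assigners.TumblingProcessingTimeWindows
    import org.apache.flink.streaming.api.windowing.time.Time
    import org.apache.flink.streaming.connectors.kafka.{FlinkKafkaConsumer, FlinkKafkaProducer}

    // UserEvent, UserEventSchema, UserBehaviorAggregator, AggregatedEvent, and
    // AggregatedEventSchema are application-defined classes that are not shown here.
    val properties = new Properties()
    properties.setProperty("bootstrap.servers", "localhost:9092")
    properties.setProperty("group.id", "user-behavior-analysis") // placeholder group ID

    val env = StreamExecutionEnvironment.getExecutionEnvironment
    env.setParallelism(12)        // one subtask per partition of user-events
    env.enableCheckpointing(5000) // checkpoint every 5 seconds

    env
      .addSource(new FlinkKafkaConsumer[UserEvent]("user-events", new UserEventSchema(), properties))
      .keyBy(_.userId)
      // timeWindow() is deprecated since Flink 1.12. Event-time windows would also
      // need a watermark strategy, so this example uses processing time.
      .window(TumblingProcessingTimeWindows.of(Time.minutes(5)))
      .aggregate(new UserBehaviorAggregator())
      .addSink(new FlinkKafkaProducer[AggregatedEvent]("processed-events", new AggregatedEventSchema(), properties))

    env.execute("user-behavior-analysis")

Step 3: Deploying AI Model Inference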

For real-time AI processing, deploy models using containerized microservices with auto-scaling capabilities. Tools like Airtable can help manage model metadata and deployment configurations in a structured format.

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: fraud-detection-model
    spec:
      replicas: 5
      selector:
        matchLabels:
          app: fraud-detection
      template:
        metadata:
          labels:
            app: fraud-detection
        spec:
          containers:
            - name: model-server
              image: fraud-detection:v1.2.3
              ports:
                - containerPort: 8080
              resources:
                requests:
                  memory: "2Gi"
                  cpu: "1000m"
                limits:
                  memory: "4Gi"
                  cpu: "2000m"
              env:
                - name: MODEL_PATH
                  value: "/models/fraud_detection_v1.pkl"
                - name: BATCH_SIZE
                  value: "32"

Step 4: Implementing Feature Engineering

Real-time feature engineering requires careful design to minimize latency while ensuring feature consistency. Implement feature caching and precomputation strategies:

    import json


    class RealTimeFeatureStore:
        def __init__(self, redis_client, feature_ttl=3600):
            self.redis = redis_client
            self.ttl = feature_ttl  # cache lifetime in seconds

        def get_user_features(self, user_id, event_timestamp):
            cache_key = f"user_features:{user_id}"
            cached_features = self.redis.get(cache_key)
            if cached_features is not None:
                return json.loads(cached_features)

            # Compute features if not cached, then cache them for `ttl` seconds
            features = self.compute_user_features(user_id, event_timestamp)
            self.redis.setex(cache_key, self.ttl, json.dumps(features))
            return features

        def compute_user_features(self, user_id, timestamp):
            # Implement real-time feature computation logic here. The three
            # helper methods below are placeholders that are not shown.
            return {
                "user_transaction_count_1h": self.get_transaction_count(user_id, timestamp, hours=1),
                "user_avg_transaction_amount_24h": self.get_avg_transaction_amount(user_id, timestamp, hours=24),
                "user_device_risk_score": self.get_device_risk_score(user_id),
            }

Step 5: Monitoring and Observability

Implement comprehensive monitoring using metrics, logs, and traces. Key metrics to track include:

- Throughput Metrics: Messages per second, processing rate, backlog size

- Latency Metrics: End-to-end latency, processing time percentiles

- Error Metrics: Error rates, retry counts, dead letter queue size

- Resource Metrics: CPU utilization, memory usage, network I/O
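Two of these metrics are easy to compute from raw numbers, as the sketch below shows. The function names are hypothetical, and in practice a metrics system such as Prometheus would usually derive percentiles from histograms rather than from raw samples. Consumer lag is taken here as the log-end offset minus the committed offset, summed over partitions.

```python
import statistics


def latency_percentiles(samples_ms):
    """Return p50, p95, and p99 from a list of latency samples in milliseconds."""
    cuts = statistics.quantiles(samples_ms, n=100, method="inclusive")
    return {"p50": cuts[49], "p95": cuts[94], "p99": cuts[98]}


def consumer_lag(end_offsets, committed_offsets):
    """Total backlog: log-end offset minus committed offset, summed over partitions."""
    return sum(end_offsets[p] - committed_offsets.get(p, 0) for p in end_offsets)
```

Tracking p95 and p99 alongside the median matters because tail latency, not the average, is usually what breaches an SLA.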

Use tools like Amplitude for tracking user behavior analytics and pipeline performance metrics in real-time dashboards.

Advanced Configuration and Optimization

Performance Tuning Strategies

Achieving optimal performance requires fine-tuning multiple system components:

- JVM Tuning: Configure G1GC with appropriate heap sizes for consistent low-latency performance

- Network Optimization: Use kernel bypass techniques like DPDK for ultra-low latency requirements

- Storage Optimization: Implement tiered storage with NVMe SSDs for hot data and object storage for cold data

- Parallelism Tuning: Balance parallelism levels to avoid resource contention while maximizing throughput

Scaling Patterns

Implement horizontal scaling patterns that can handle traffic spikes and growth:

Best Practice: Design your pipeline to scale individual components independently. Use auto-scaling groups with custom metrics like queue depth and processing latency to trigger scaling decisions automatically.

| Component | Scaling Trigger | Target Metric | Scale-out Time |
|---|---|---|---|
| Kafka Consumers | Consumer lag > 10,000 | Messages/second | 30 seconds |
| Stream Processors | CPU > 70% | Processing latency | 60 seconds |
| Model Servers | Response time > 100ms | Inference latency | 45 seconds |
| Feature Store | Cache hit rate < 85% | Feature lookup time | 20 seconds |
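The triggers in this table can be expressed as a small decision function. This is only a sketch: the `Metrics` fields and component names are assumptions made for illustration, and a real deployment would typically feed these signals to an autoscaler rather than evaluate them by hand.

```python
from dataclasses import dataclass


@dataclass
class Metrics:
    consumer_lag: int          # messages behind the log end
    cpu_utilization: float     # fraction between 0.0 and 1.0
    model_response_ms: float   # model server response time
    cache_hit_rate: float      # fraction between 0.0 and 1.0


def components_to_scale(m: Metrics) -> list:
    """Return the components whose scale-out trigger has fired."""
    triggers = {
        "kafka-consumers": m.consumer_lag > 10_000,
        "stream-processors": m.cpu_utilization > 0.70,
        "model-servers": m.model_response_ms > 100,
        "feature-store": m.cache_hit_rate < 0.85,
    }
    return [name for name, fired in triggers.items() if fired]
```

Evaluating each component separately is what allows the independent scaling described above: a lag spike adds consumers without touching the model servers.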

Troubleshooting Common Issues

High Latency Problems

Symptom: End-to-end latency exceeding SLA requirements (>500ms for most use cases)

Common Causes and Solutions:

- Network Bottlenecks: Monitor network utilization and implement connection pooling

- Garbage Collection Pauses: Tune JVM GC settings and consider using low-latency collectors

- Inefficient Serialization: Switch from JSON to Avro or Protocol Buffers for better performance

- Database Contention: Implement read replicas and connection pooling

Data Quality Issues

Symptom: Inconsistent or corrupted data in downstream systems

Diagnostic Steps:

- Implement schema validation at ingestion points

- Add data quality checks in stream processing logic

- Monitor data freshness and completeness metrics

- Implement circuit breakers for upstream data sources
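Schema validation at the ingestion point can start as simply as the sketch below. The field names and the non-negative amount rule are hypothetical; a production pipeline would more likely enforce an Avro or Protocol Buffers schema through a schema registry. Events that fail validation are routed to a dead-letter list together with the reasons, so they can be inspected instead of silently dropped.

```python
REQUIRED_FIELDS = {"user_id": str, "amount": (int, float), "timestamp": (int, float)}


def validate_event(event):
    """Return a list of problems; an empty list means the event passed."""
    problems = []
    for field, expected in REQUIRED_FIELDS.items():
        if field not in event:
            problems.append(f"missing field: {field}")
        # bool is a subclass of int in Python, so reject it explicitly.
        elif isinstance(event[field], bool) or not isinstance(event[field], expected):
            problems.append(f"wrong type for {field}: {type(event[field]).__name__}")
    if not problems and event["amount"] < 0:
        problems.append("amount must be non-negative")
    return problems


def route(events):
    """Split events into valid ones and a dead-letter list with reasons."""
    valid, dead_letter = [], []
    for event in events:
        problems = validate_event(event)
        if problems:
            dead_letter.append({"event": event, "problems": problems})
        else:
            valid.append(event)
    return valid, dead_letter
```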

Scaling Bottlenecks

Symptom: System performance degrades under increased load

Resolution Strategies:

- Identify hot partitions and implement better key distribution

- Optimize resource allocation based on actual usage patterns

- Implement backpressure mechanisms to prevent system overload

- Use load testing tools to identify breaking points before production deployment
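Hot partitions can often be spotted offline from a sample of message keys. The sketch below uses CRC32 as a stand-in hash (Kafka's default partitioner uses murmur2, so actual partition numbers will differ), and the 2x threshold and salt bucket count are arbitrary assumptions. It flags partitions that receive far more than the mean load and shows key salting as one way to spread a single heavy key.

```python
import zlib
from collections import Counter


def partition_for(key, num_partitions):
    """Stable hash partitioner (CRC32 here; Kafka's default uses murmur2)."""
    return zlib.crc32(key.encode("utf-8")) % num_partitions


def hot_partitions(keys, num_partitions, threshold=2.0):
    """Return partitions receiving more than `threshold` times the mean load."""
    counts = Counter(partition_for(k, num_partitions) for k in keys)
    mean = len(keys) / num_partitions
    return sorted(p for p, c in counts.items() if c > threshold * mean)


def salted_key(key, sequence, salt_buckets=8):
    """Spread one heavy key over several sub-keys; consumers must re-aggregate."""
    return f"{key}#{sequence % salt_buckets}"
```

Salting trades per-key ordering for balance: events for the same original key land on different partitions, so any downstream aggregation has to merge the sub-keys again.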

Model Performance Degradation

Symptom: AI model accuracy drops over time

Mitigation Approaches:

- Implement continuous model monitoring and drift detection

- Set up automated retraining pipelines triggered by performance thresholds

- Use A/B testing frameworks to validate new model versions

- Maintain model performance baselines and alerting thresholds
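One common way to quantify drift is the Population Stability Index (PSI), which compares the distribution of a feature or model score in recent traffic against a training-time baseline. The sketch below is a simple equal-width-bin version; the bin count and the small floor for empty bins are assumptions. A frequently quoted rule of thumb treats PSI below 0.1 as stable and above 0.25 as a significant shift, but those cutoffs should be validated against your own data.

```python
import math


def psi(expected, actual, bins=10):
    """Population Stability Index between a baseline and a recent sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0   # avoid a zero width for constant baselines

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1   # clamp out-of-range values
        # A small floor avoids log(0) for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

Computing this per feature on a schedule, and alerting when it crosses a threshold, is one way to trigger the automated retraining mentioned above.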

Security and Compliance Considerations

Real-time data pipelines often process sensitive information requiring robust security measures:

Data Encryption and Privacy

- Encryption in Transit: Use TLS 1.3 for all network communications

- Encryption at Rest: Implement AES-256 encryption for stored data

- Data Masking: Mask PII fields or apply field-level encryption before data leaves the ingestion layer

- Access Controls: Implement RBAC with principle of least privilege
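For fields that downstream systems need to join on but never read, keyed hashing is a lightweight alternative to field-level encryption. The sketch below is pseudonymization, not encryption: the tokens cannot be decrypted, and the field names and key handling are hypothetical. In practice the key would come from a secrets manager rather than from code.

```python
import hashlib
import hmac

PII_FIELDS = {"email", "card_number"}  # hypothetical field names


def pseudonymize(value, secret_key):
    """Keyed hash: the same input always maps to the same token, so joins still work."""
    return hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def mask_event(event, secret_key):
    """Return a copy of the event with PII fields replaced by keyed hashes."""
    return {
        k: pseudonymize(v, secret_key) if k in PII_FIELDS else v
        for k, v in event.items()
    }
```

Because low-entropy values such as phone numbers can be guessed by anyone holding the key, access to the key should be restricted as tightly as access to the raw data.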

Compliance Requirements

Ensure your pipeline meets regulatory requirements like GDPR, HIPAA, or PCI DSS by implementing:

- Audit logging for all data access and modifications

- Data retention policies with automated purging

- Right to be forgotten capabilities

- Data lineage tracking for compliance reporting

Cost Optimization Strategies

Real-time pipelines can be expensive to operate. Implement these cost optimization strategies:

Resource Optimization

- Right-sizing: Use monitoring data to optimize instance sizes and types

- Spot Instances: Leverage spot instances for non-critical batch processing components

- Auto-scaling: Implement aggressive auto-scaling policies to minimize idle resources

- Reserved Capacity: Use reserved instances for predictable baseline workloads

Storage Cost Management

- Implement intelligent tiering policies for historical data

- Use compression algorithms optimized for your data types

- Set up automated data lifecycle management

- Monitor and optimize data retention policies regularly

Consider using workflow automation tools like those available through Bubble to create cost monitoring dashboards that provide real-time visibility into pipeline expenses.

Next Steps and Advanced Topics

Once you have a basic real-time pipeline operational, consider these advanced topics for further optimization:

Multi-Cloud and Edge Computing

- Implement multi-cloud strategies for disaster recovery and cost optimization

- Deploy edge computing nodes for ultra-low latency requirements

- Use CDN integration for global data distribution

Advanced AI Integration

- Implement online learning systems for continuous model improvement

- Use reinforcement learning for dynamic pipeline optimization

- Deploy federated learning for distributed model training

Recommended Resources

- Books: “Streaming Systems” by Tyler Akidau, “Designing Data-Intensive Applications” by Martin Kleppmann

- Courses: Apache Kafka certification, Google Cloud Professional Data Engineer

- Communities: Apache Kafka community, Flink Forward conference

- Tools: Confluent Platform for managed Kafka, Databricks for unified analytics

Frequently Asked Questions

What’s the difference between real-time and near real-time processing?

Real-time processing typically refers to systems that process data within milliseconds (sub-second latency), while near real-time usually means processing within seconds to minutes. True real-time systems are required for applications like fraud detection and algorithmic trading, while near real-time is sufficient for applications like recommendation engines and monitoring dashboards.

How do I choose between Apache Kafka and Amazon Kinesis for message streaming?

Apache Kafka offers more flexibility and control but requires more operational overhead. Choose Kafka when you need maximum throughput, complex routing logic, or multi-cloud deployments. Amazon Kinesis is better for AWS-native environments where you want managed services with less operational complexity. Kafka typically offers better price-performance at scale, while Kinesis provides easier setup and management.

What are the key metrics to monitor in a real-time AI pipeline?

Focus on four categories of metrics: (1) Throughput metrics like messages/second and processing rate, (2) Latency metrics including end-to-end latency and model inference time, (3) Quality metrics such as model accuracy and data freshness, and (4) Resource metrics covering CPU, memory, and network utilization. Set up alerting thresholds at 80% of your SLA requirements to catch issues before they impact users.

How can I ensure data consistency in distributed real-time systems?

Implement eventual consistency patterns with idempotent operations and proper event ordering. Use techniques like event sourcing, CQRS (Command Query Responsibility Segregation), and saga patterns for complex workflows. Design your system to handle duplicate events gracefully and implement proper retry mechanisms with exponential backoff. Consider using distributed consensus algorithms like Raft for critical consistency requirements.
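Two of these techniques, idempotent handling of duplicates and retries with exponential backoff, fit in a short sketch. The names are hypothetical, the in-memory set stands in for what would need to be a durable store, and the backoff uses full jitter as one reasonable choice among several.

```python
import random


def backoff_delays(retries, base=0.1, cap=5.0, rng=random.random):
    """Exponential backoff with full jitter: each delay is rng() * min(cap, base * 2**n)."""
    return [rng() * min(cap, base * 2 ** attempt) for attempt in range(retries)]


class IdempotentProcessor:
    """Applies each event at most once, keyed by a unique event ID."""

    def __init__(self):
        self.seen = set()   # in production this would be a durable store
        self.balance = 0

    def apply(self, event):
        if event["event_id"] in self.seen:
            return False    # duplicate delivery: safely ignored
        self.balance += event["amount"]
        self.seen.add(event["event_id"])
        return True
```

With at-least-once delivery, a retried message may arrive twice; because `apply` ignores event IDs it has already seen, the retry cannot double-count the amount.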

Building production-ready real-time data pipelines with AI processing requires careful planning, robust architecture, and continuous optimization. The investment in proper design and implementation pays dividends in system reliability, performance, and maintainability. If you’re looking to accelerate your real-time pipeline implementation or need expert guidance on complex architectural decisions, consider leveraging futia.io’s automation services to build scalable, intelligent data processing systems tailored to your specific business requirements.
